Q-Review Analytics

A large amount of data is available to instructors in the Q-Review software, Echo360. This includes the total number of video views, total note events (notes which students have typed alongside the recordings), engagement scores (which can be configured by applying weightings to the metrics) and the number of confusion flags raised by students. This information can be viewed either by lecture or by student.

This guide covers how to:

- Access the analytics for Q-Review recordings

- Interpret the data shown

- Change the weightings for the engagement score

It assumes you have access as an instructor to a Q-Review section.

Accessing Q-Review analytics

- Navigate to the QMplus page for which you wish to access the lecture recording analytics

- Click on the Q-Review tool to launch it

- Click on the analytics tab in the top right corner of the screen

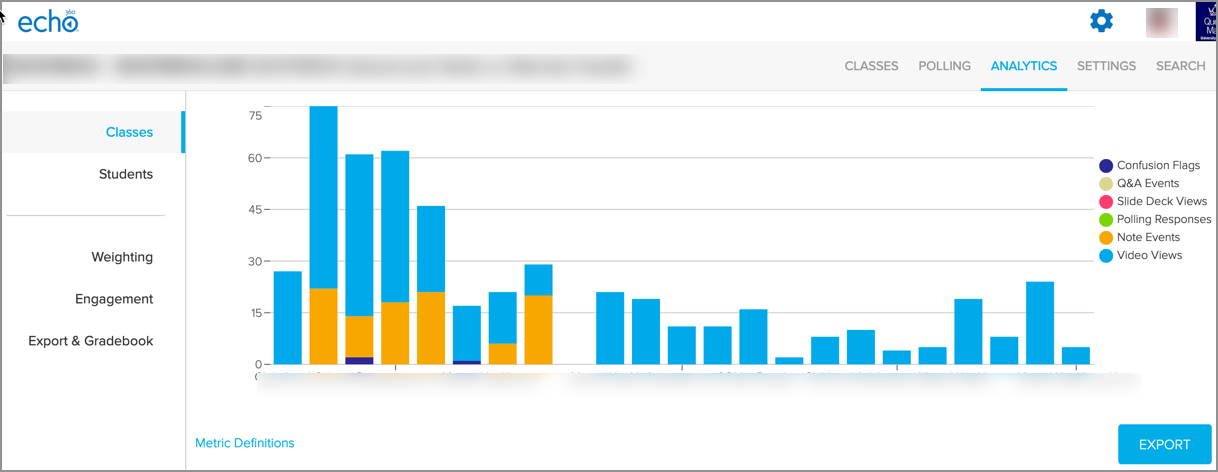

- One can now see the following, which is a typical example of statistics available for interaction with Q-Review recordings:

Each of the bars in this graph represents a lecture recording and, for each, one can see the total number of video views, note events, any polling responses, slide deck views (for presentation material uploaded separately), Q&A events & confusion flags. In the example above, we can see where there is the greatest interaction: weeks 2-5 received a larger number of video views than the other weeks, a number of recordings received approximately 15 instances of notes being taken, and weeks 3 & 6 had confusion flags raised against some of the content.

- If one hovers the mouse over the class’s bar on the graph, one can see accurate numbers for each of the elements shown:

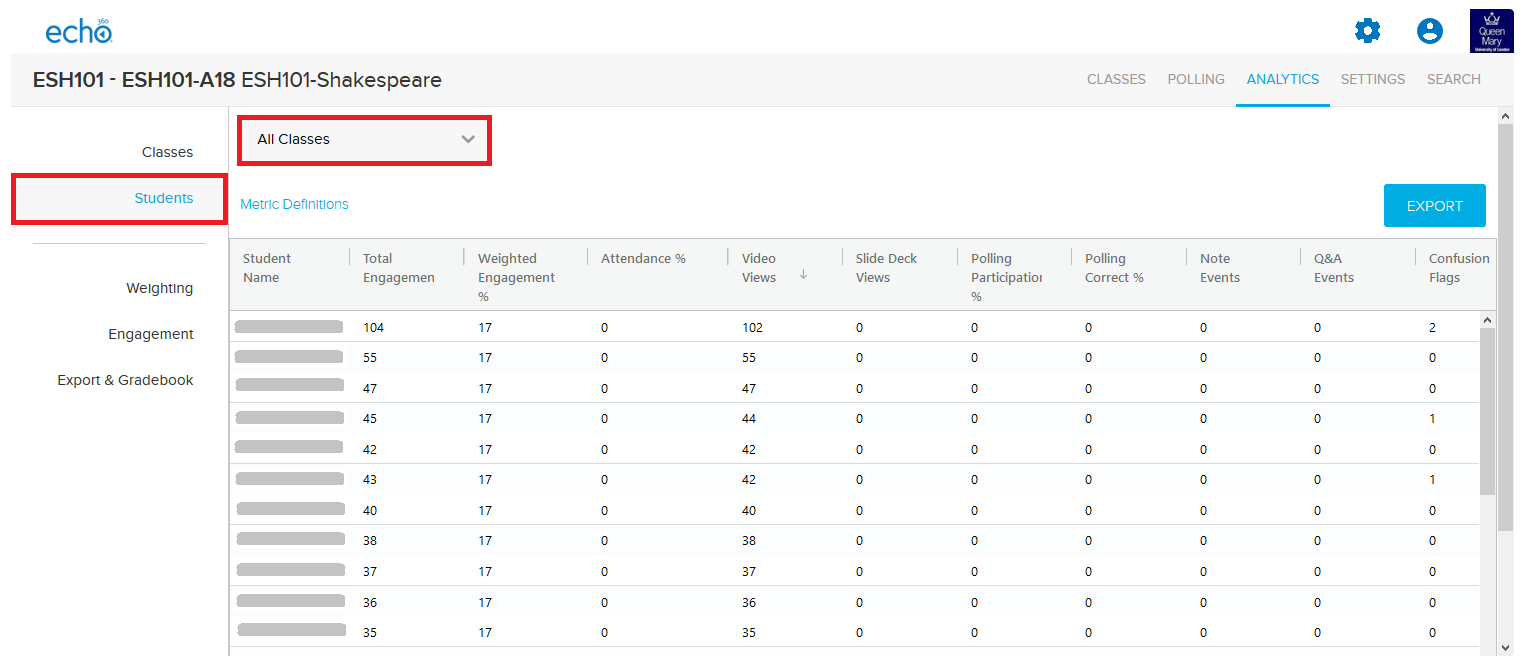

- If one clicks on ‘Students’ on the left-hand side of the graph, one can then view the analytics for each of the students who have interacted with the Q-Review tool on QMplus. (Please note, the list of students will only include those who have clicked on the Q-Review tool or have been manually added, rather than all who are enrolled on the QMplus course area.) For this example, I have anonymised the students’ names & the data shown is for ‘all classes’:

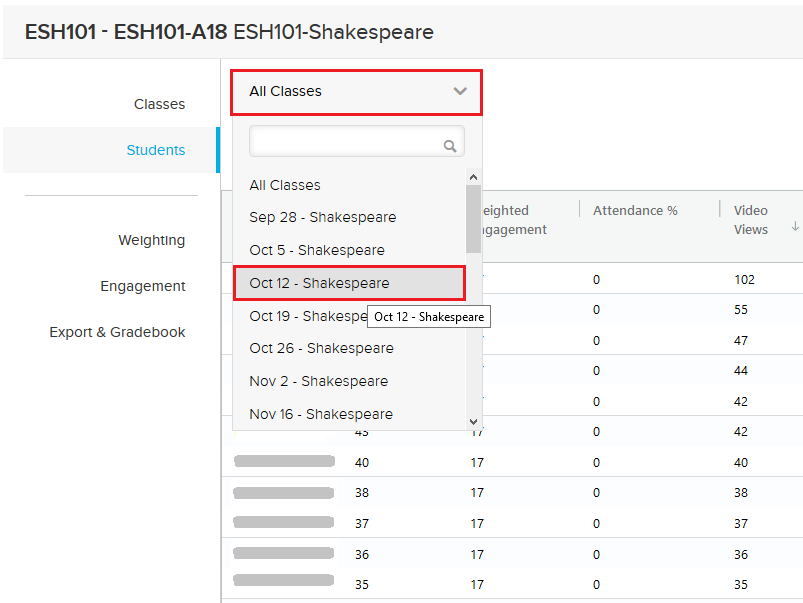

Should one wish to view the analytics for a particular class’s recording, the recording can be selected via the drop-down menu, as shown here:

Interpreting the data shown

As with any data, it is important to be mindful that a number of variables can explain the peaks or troughs for particular weeks’ recordings. In the past, significant factors have included, but are not limited to:

- Complex material being taught

- Assessments being delivered on a particular topic

- Students not being able to attend class (due to tube strikes, for example)

- Cancelled lectures

- Lectures for which the recorded content is not so useful

- Audio visual issues (resulting in audio/video not being present)

Engagement Scores

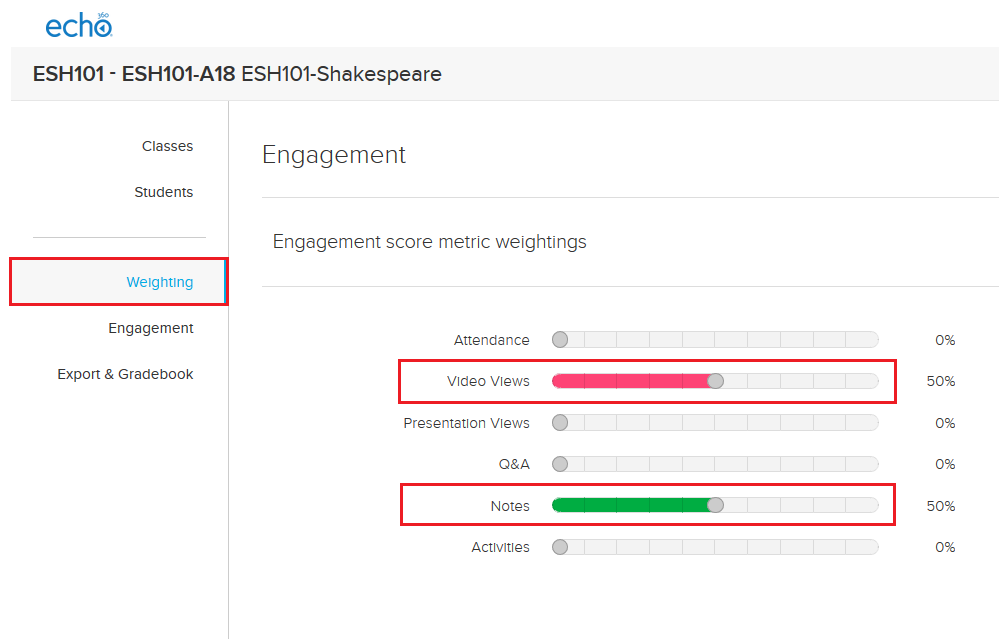

The engagement scores which are available for classes and students are, again, values which should be interpreted carefully by the instructor(s). A low engagement score does not necessarily mean the student is at risk of discontinuing: the student may have interacted very little with the recording, taken notes offline (rather than alongside the Q-Review lecture capture) and still have understood all of the content delivered. To get more meaningful data from the engagement score, instructors can adjust the metrics which are used to generate it, via the ‘weighting’, as shown here:

In the example above, I have enabled only the metrics for ‘video views’ and ‘notes’, as the data for the other available options were not relevant in this instance. Should I be more interested in video views than notes taken, I could adjust these toggles accordingly to give video views more weight in the engagement score generated.
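To illustrate how a weighting can change a score, here is a minimal sketch in Python. It is not Q-Review's actual calculation, which this guide does not describe. It assumes that each metric has already been scaled to a value between 0 and 1 and that the score is a simple weighted average. The function name, metric names and values are all hypothetical.

```python
def engagement_score(metrics, weights):
    """Illustrative weighted average of metrics, scaled to 0-100.

    Assumption: every metric value is already normalised to the range 0..1.
    This is NOT the formula used by Q-Review; it only shows the idea of weighting.
    """
    total_weight = sum(weights.values())
    if total_weight == 0:
        # No metric is enabled, so there is nothing to score.
        return 0.0
    weighted_sum = sum(
        weight * metrics.get(name, 0.0) for name, weight in weights.items()
    )
    return 100.0 * weighted_sum / total_weight


# Hypothetical student: watched most of the videos, took few notes.
student = {"video_views": 0.8, "notes": 0.2, "confusion_flags": 0.0}

# Only 'video views' and 'notes' enabled, with equal weight.
equal = engagement_score(student, {"video_views": 1, "notes": 1})

# Video views given three times the weight of notes.
views_heavy = engagement_score(student, {"video_views": 3, "notes": 1})

print(round(equal, 1), round(views_heavy, 1))
```

In this sketch, the same student scores higher once video views carry more weight, which is why a score should always be read alongside the weighting that produced it.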

Did this answer your query? If not, you can raise a ticket on the online Helpdesk or email its-helpdesk@qmul.ac.uk. Alternatively, you can request a particular guide or highlight an error in this guide using our guides request tracker.

Produced by the Technology Enhanced Learning Team at Queen Mary University of London.